When we say HomeGenie can transform natural language into complex, full-stack applications, we mean it. For this experiment, we wanted to see if the Widget Genie could handle something computationally heavy and hardware-intensive: real-time audio processing and physical light synchronization.

We didn't just want a pretty graph; we wanted a "Neural Engine" that could listen to the environment and make the smart home dance.

The journey started with a simple prompt to generate the user interface:

"Create a 'sound visualizer' widget that uses microphone input to 'visualize' sound with some cool synchronized animation."

The Genie instantly wrote a production-grade component using the Web Audio API and a <canvas> element to draw a reactive frequency spectrum. But we wanted more. We asked it to add a 3D effect.
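To make the 2D spectrum idea concrete, here is a minimal sketch of the per-frame step such a widget might perform: reducing one frame of byte frequency data (values 0 to 255, the format `AnalyserNode.getByteFrequencyData()` produces in the Web Audio API) to a handful of bar heights for the canvas. The function name `spectrumToBars` and its parameters are illustrative assumptions, not the code the Genie generated.

```typescript
// Sketch only: average neighbouring frequency bins into `barCount` bars and
// scale each average (0-255) to a pixel height for the canvas.
function spectrumToBars(
  bins: Uint8Array,
  barCount: number,
  canvasHeight: number
): number[] {
  const bars: number[] = [];
  // Each bar covers an equal, contiguous slice of the bins.
  const binsPerBar = Math.max(1, Math.floor(bins.length / barCount));
  for (let i = 0; i < barCount; i++) {
    const start = i * binsPerBar;
    const end = Math.min(bins.length, start + binsPerBar);
    let sum = 0;
    for (let j = start; j < end; j++) sum += bins[j];
    const avg = end > start ? sum / (end - start) : 0;
    bars.push((avg / 255) * canvasHeight);
  }
  return bars;
}
```

In a browser, the resulting heights could be drawn once per animation frame with `fillRect` on the canvas 2D context.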

In a matter of seconds, the AI integrated the Three.js library, creating a dual-mode visualizer. Users can now toggle seamlessly between a classic 2D spectrum and a dynamic, rotating 3D ring of reactive pillars.
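The ring of pillars comes down to simple geometry. The sketch below places a number of pillars evenly on a circle, under the assumption that each pillar is a mesh whose height is later driven by the audio level. `ringLayout` and `PillarPosition` are hypothetical names used for illustration; the generated widget may organize this differently.

```typescript
// Hypothetical helper: positions for `count` pillars spaced evenly on a
// circle of the given radius in the horizontal (x/z) plane.
interface PillarPosition {
  x: number;
  z: number;
  angle: number; // radians, useful for rotating a pillar to face outward
}

function ringLayout(count: number, radius: number): PillarPosition[] {
  const positions: PillarPosition[] = [];
  for (let i = 0; i < count; i++) {
    const angle = (i / count) * 2 * Math.PI;
    positions.push({
      x: radius * Math.cos(angle),
      z: radius * Math.sin(angle),
      angle,
    });
  }
  return positions;
}
```

In Three.js, each entry could be assigned to a mesh's `position.x` and `position.z`, with `scale.y` updated every frame from the matching frequency value.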

A visualizer on a screen is great, but a true smart home application interacts with the physical world. We asked the Genie to build a backend C# program to synchronize our smart lights with the music.

"Now, let's create a program that implements an API endpoint to let this widget send data that can then be used by the program to control lights synchronizing them to the music..."

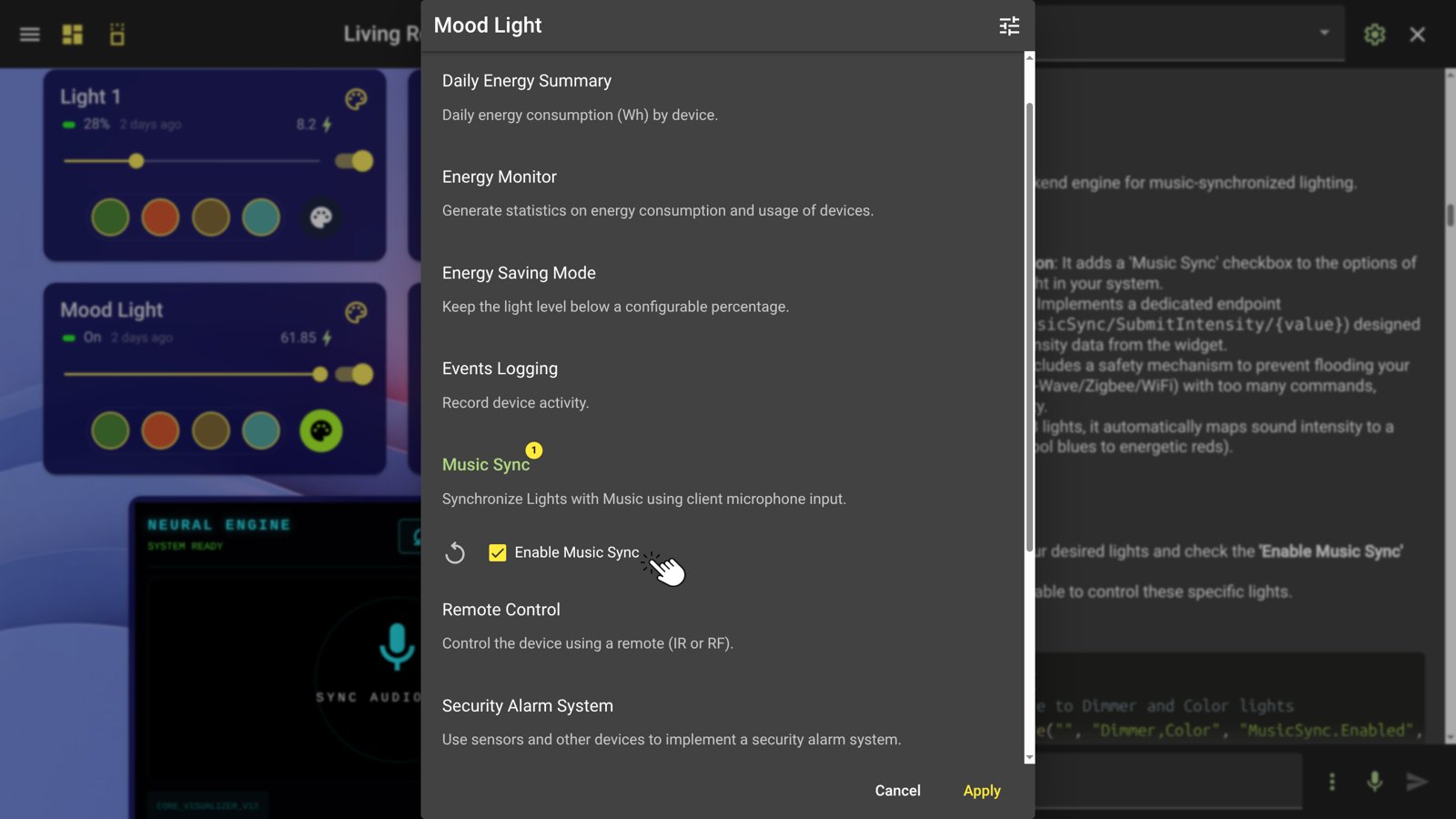

The AI not only generated the C# backend API (HomeAutomation.MusicSync/SubmitIntensity) but also used Dynamic Feature Injection: it automatically added an "Enable Music Sync" checkbox to the settings of every Dimmer and Color light in the HomeGenie system.
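On the widget side, sending data to that endpoint means serializing the current band levels into a request. The sketch below shows one way this could look. Only the endpoint name comes from the text above; the `/api/` prefix, the comma-separated percentage payload, and the helper name `buildIntensityPath` are assumptions made for illustration and may not match the generated program.

```typescript
// Endpoint name as quoted in the article.
const MUSIC_SYNC_ENDPOINT = "HomeAutomation.MusicSync/SubmitIntensity";

// Assumed payload format: one integer percentage (0-100) per band,
// comma-separated in the last path segment.
function buildIntensityPath(levels: number[]): string {
  const percents = levels.map((v) =>
    // Clamp to [0, 1] first so out-of-range values cannot reach the lights.
    Math.round(Math.max(0, Math.min(1, v)) * 100)
  );
  return `/api/${MUSIC_SYNC_ENDPOINT}/${percents.join(",")}`;
}
```

A widget would likely call something like this at a throttled rate (a few times per second) rather than on every animation frame, so the backend and the lights are not flooded with updates.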

This is where Vibe Coding truly shines. Initially, the lights were reacting, but the sync felt "off." The bass was overpowering the higher frequencies.

Instead of manually debugging complex math, we simply told the AI what we observed:

"The first light is going very good, nice, but the second is kind of low values, the 3rd and 4th I see data pulsing but they are stuck at 0."

The AI immediately understood the acoustic physics problem: music carries most of its energy in the low frequencies, so the upper bands were receiving almost no signal. It autonomously rewrote the analysis logic, implementing Adaptive Logarithmic Mapping and Peak Detection. It shifted the frequency ranges, applied sensitivity boosts to the treble bands, and lowered the smoothing constant.
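The techniques named above can be sketched in a few lines. This is an illustrative reconstruction under stated assumptions, not the code the Genie produced: band edges are spaced logarithmically, each band is normalized against a slowly decaying peak of its own recent values, and the result is exponentially smoothed. All names and tuning values (`gains`, `smoothing`, `peakDecay`, `peakFloor`) are hypothetical.

```typescript
// Band edges spaced geometrically from bin 1 up to binCount (bin 0, the DC
// component, is skipped), so each band spans the same number of octaves.
function logBandEdges(binCount: number, bandCount: number): number[] {
  const edges: number[] = [];
  for (let b = 0; b <= bandCount; b++) {
    edges.push(Math.round(Math.pow(binCount, b / bandCount)));
  }
  return edges;
}

// Hypothetical tuning values, not taken from the generated program.
interface AnalyzerOptions {
  gains: number[];   // per-band sensitivity boost (higher for treble)
  smoothing: number; // 0 = no smoothing, closer to 1 = slower response
  peakDecay: number; // per-frame decay of the tracked peak
  peakFloor: number; // keeps near-silence from being amplified to full scale
}

function createBandAnalyzer(
  opts: AnalyzerOptions
): (bins: Uint8Array) => number[] {
  const bandCount = opts.gains.length;
  const peaks = new Array<number>(bandCount).fill(0);
  const levels = new Array<number>(bandCount).fill(0);

  return (bins: Uint8Array): number[] => {
    const edges = logBandEdges(bins.length, bandCount);
    for (let b = 0; b < bandCount; b++) {
      let sum = 0;
      for (let i = edges[b]; i < edges[b + 1]; i++) sum += bins[i];
      const width = edges[b + 1] - edges[b];
      const raw = width > 0 ? (sum / width / 255) * opts.gains[b] : 0;
      // Peak detection: follow the loudest recent value, decaying slowly.
      peaks[b] = Math.max(raw, peaks[b] * opts.peakDecay, opts.peakFloor);
      // Adaptive normalization: each band is scaled against its own peak.
      const normalized = Math.min(1, raw / peaks[b]);
      // Exponential smoothing; a lower constant reacts faster to beats.
      levels[b] = opts.smoothing * levels[b] + (1 - opts.smoothing) * normalized;
    }
    return levels.slice();
  };
}
```

With 256 bins and 4 bands the edges fall at bins 1, 4, 16, 64 and 256, so the bass band covers only three bins while the treble band covers 192. Because every band is normalized against its own recent peak, a quiet treble band can still use the full brightness range instead of sitting near zero.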

We then asked for one final touch: "The colors should rotate/animate." The Genie updated both the frontend and backend to implement a synchronized Global Color Rotation Engine, making the lights flow through a vibrant rainbow spectrum while still pulsing to the beat.
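One simple way to get a rotating rainbow is to derive every light's hue from a shared clock, so that frontend and backend agree without exchanging extra state. The sketch below assumes that approach; `rotatedHues` and the default speed of 30 degrees per second are illustrative choices, not details from the generated code.

```typescript
// Hypothetical helper: hue (0-360 degrees) for each light at a given time.
// Lights are spread evenly around the colour wheel and rotate together.
function rotatedHues(
  elapsedMs: number,
  lightCount: number,
  degreesPerSecond = 30
): number[] {
  const base = (elapsedMs / 1000) * degreesPerSecond;
  const spacing = 360 / lightCount;
  return Array.from(
    { length: lightCount },
    (_, i) => (base + i * spacing) % 360
  );
}
```

Each light's brightness would still come from its band level, so the hue controls the rainbow flow while the level carries the pulse.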

Building a real-time, 4-band spectral analysis engine with a 3D UI and a C# hardware-sync backend would normally take a senior developer days of work.

With HomeGenie's Widget Genie, it took a series of conversational prompts and immediate, live-reloading feedback. You don't write syntax; you describe the experience, refine the behavior, and let the AI handle the complexity.

Did you know that HomeGenie widgets are 100% portable and can be embedded into any external web page? You can learn how to export them in the Programming Widgets introduction.

Want to turn your dashboard into a Neural Audio Engine?